The Next Frontier: Data Mining Social Media Images

Imagine what you could measure about the world if you had access to all the photos ever taken. This is a question that fascinates Noah Snavely, associate professor of computer science at Cornell Tech. An abundance of tools can mine data from web-based text, but for many years, the computer vision community has thought of images as the ‘dark matter’ of the web.

Snavely is currently working in the Cornell Graphics and Vision Group, where he develops innovative technologies for unlocking this vast data source. But his fascination with computer vision goes back to his student days.

3D Modeling from 2D Web Images

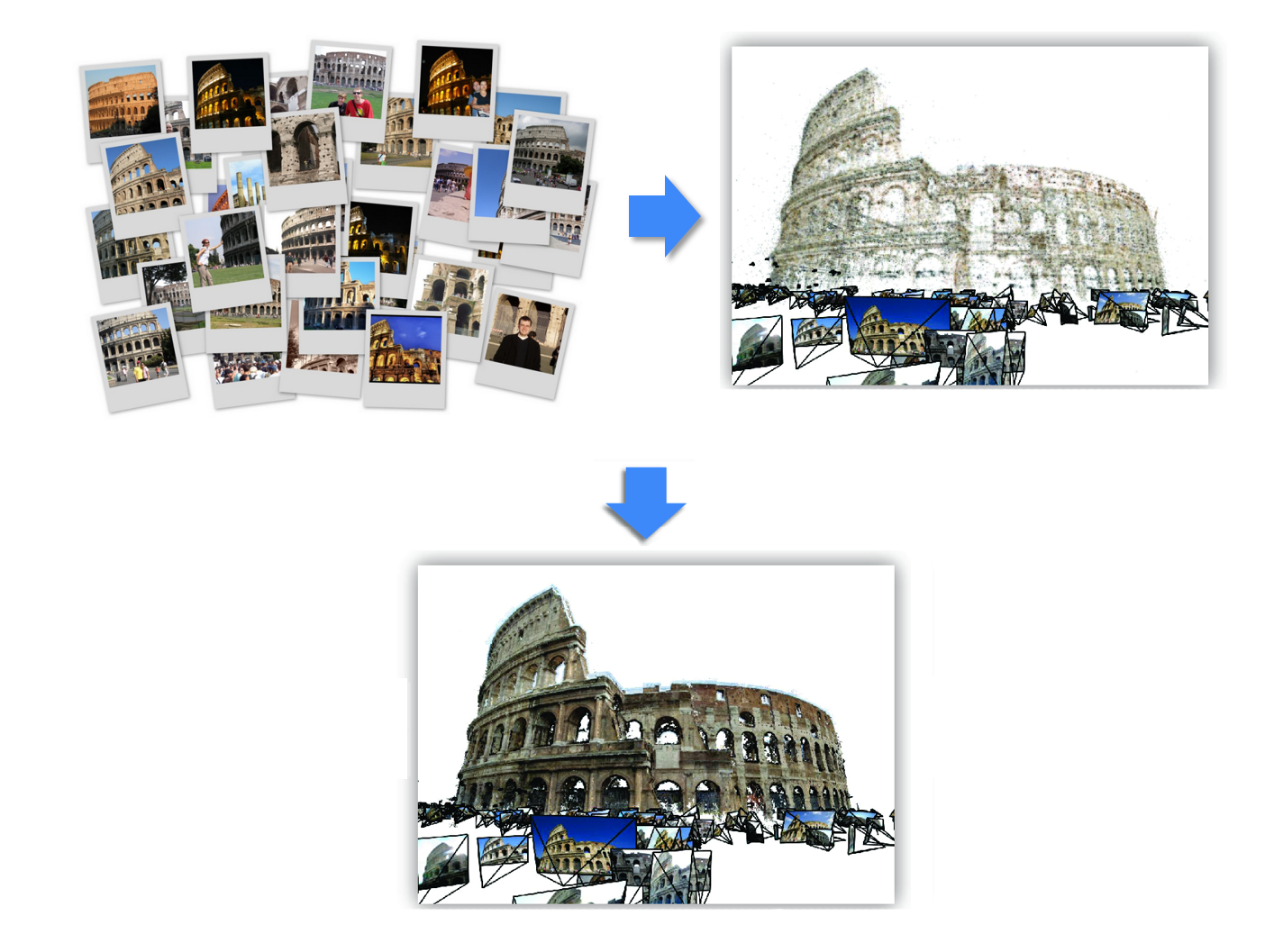

As a computer science undergrad at the University of Arizona, Snavely became drawn to the challenges of 3D vision, which combines computer vision and graphics and can be used to infer 3D models of the world from 2D images.

With the launch of photo sharing sites, such as Flickr, Snavely gained access to vast data sets that propelled his work into new territories. He began building 3D models from 2D images downloaded from the Internet.

Every day, he says, tourists take photos, at different times and from different angles, of famous landmarks and upload them to the web.

Snavely uses tourist photos taken at different angles to construct 3D models.

“There is no coordination between them. They’re just like an unorganized set of photos,” explains Snavely. “But from that we can reconstruct a 3D model of that place, and that works for any landmark where people take lots of photos.”

The applications of 3D modeling are many, including measuring and reconstruction. But Snavely was interested in using it to visualize what it is like to be in a location and to be able to move around places and landmarks, like New York City or the Statue of Liberty, observing them from different angles. He wanted to explore how people could experience those landmarks as if they were there.

Precision Image Localization

His innovative work on 3D modeling led to other projects. Snavely developed an image localization technique that could analyze a photograph taken anywhere in the world and, by quickly matching it against a database of known images, identify its latitude and longitude as well as the exact orientation of the camera when the shot was taken. The project was a research collaboration with Cornell Tech Dean Dan Huttenlocher and PhD student Yunpeng Li.

Building on this, in 2012 he co-founded a company called TaggPic with his wife, Beth Xie. The technology allowed the position and orientation of photographs to be determined by referencing them against 3D models. The company was ultimately acquired by Google.

“Let’s say I built the model of the Statue of Liberty. Then I could take a new image and, based on how it relates to the 3D model, I could estimate exactly where that image must have been taken to see that particular view of the Statue of Liberty,” Snavely explains.
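The idea in that quote can be sketched in a few lines. The example below is a toy illustration only, not Snavely's method: it assumes a simple pinhole camera with a known focal length, a camera whose axes are aligned with the world (so only position, not orientation, is unknown), and perfect matches between image points and 3D model points. All names and numbers are made up for illustration.

```python
# Toy camera localization: recover where a photo was taken from known 3D points.
# Assumptions (not from the article): pinhole camera, known focal length f,
# no camera rotation, and noise-free 2D-3D matches.

def project(point, cam, f):
    """Project a 3D world point into the image of a camera at position `cam`."""
    x, y, z = (p - c for p, c in zip(point, cam))
    return (f * x / z, f * y / z)

def solve3(a, b):
    """Solve a 3x3 linear system by Gauss-Jordan elimination with pivoting."""
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(3):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [vr - factor * vc for vr, vc in zip(m[r], m[col])]
    return [m[i][3] / m[i][i] for i in range(3)]

def locate_camera(points3d, points2d, f):
    """Least-squares camera position (cx, cy, cz) from 2D-3D matches.

    Each match (X, Y, Z) <-> (u, v) gives two linear equations:
        f*cx - u*cz = f*X - u*Z
        f*cy - v*cz = f*Y - v*Z
    """
    rows, rhs = [], []
    for (X, Y, Z), (u, v) in zip(points3d, points2d):
        rows.append([f, 0.0, -u])
        rhs.append(f * X - u * Z)
        rows.append([0.0, f, -v])
        rhs.append(f * Y - v * Z)
    # Normal equations: (A^T A) c = A^T b
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    atb = [sum(r[i] * b for r, b in zip(rows, rhs)) for i in range(3)]
    return solve3(ata, atb)

# Hypothetical 3D model points (e.g. corners on a landmark) and a "new" photo.
landmarks = [(0.0, 0.0, 10.0), (2.0, 1.0, 12.0), (-1.0, 3.0, 9.0), (1.0, -2.0, 11.0)]
true_cam = (0.5, -0.3, 1.0)
focal = 800.0
observations = [project(p, true_cam, focal) for p in landmarks]

estimate = locate_camera(landmarks, observations, focal)
```

Here `estimate` recovers `true_cam` up to floating-point error. A real system would also have to estimate the camera's orientation and cope with noisy or wrong matches, which this sketch deliberately leaves out.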

This technology could also allow for precise tracking and graphics overlay in Virtual Reality (VR) and Augmented Reality (AR), which can be used to provide dynamic tourist and directional information.

To draw graphics on top of a camera image and have them “look like it’s stuck to the world” requires extremely accurate image localization, Snavely says.

Social Media Images: An Untapped Data Source

Now at Cornell Tech, Snavely has turned his attention to the valuable data locked into social media images. Billions of photographs are uploaded to the web every day, and while there are many tools for mining data from text, such as tweets and social posts, images are the next frontier, he says.

“They are a large set of data. It’s largely untapped because we don’t have good tools for analyzing it but there’s a lot of information locked into it.”

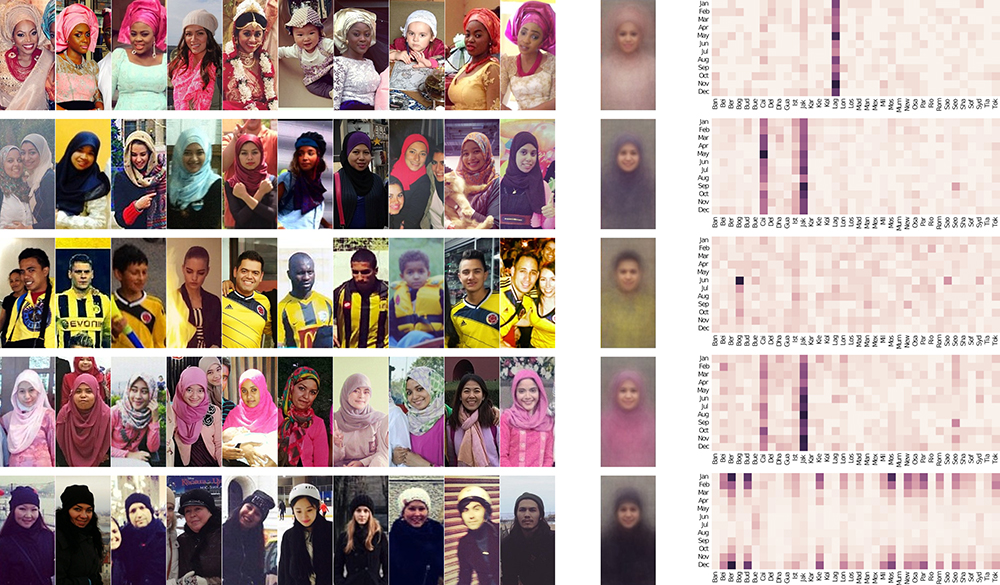

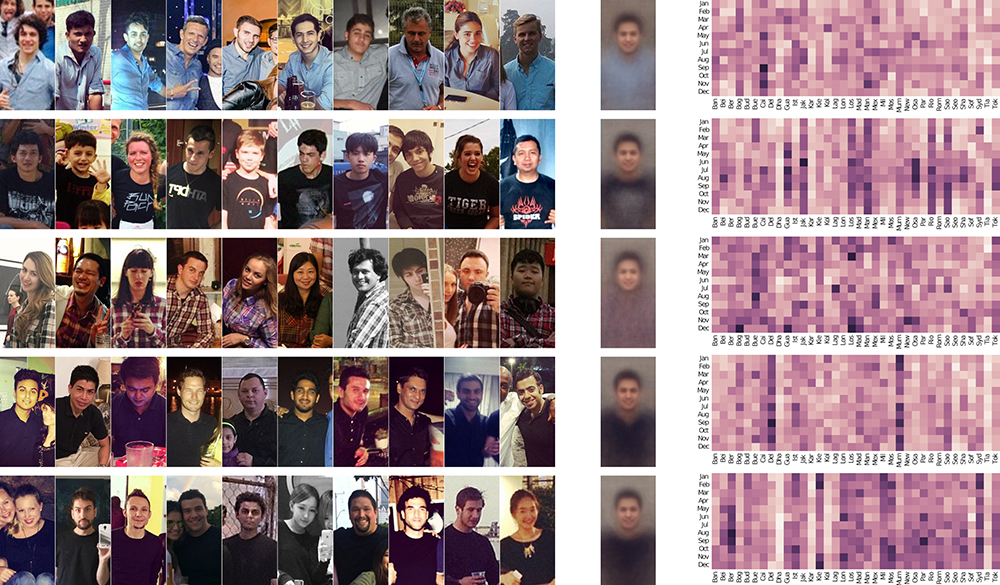

Snavely is starting by looking at what we can learn about fashion trends by tapping into this data source.

Snavely presenting “Street Style” at Open Studio.

“How common is it for people to wear red clothing in photographs? Or glasses and hats and ties, any fashion?” he asks. “Does New York City influence other places in terms of fashion? Does Paris influence the US?”

To date, answering such questions has been very painstaking, involving interviews and observations. “The idea is that social media images will make all this much easier,” he says.

Snavely and his team are devising frameworks for analyzing these images, developing technologies that take raw pixels and extract facts from them.

“We’re using machine learning for that, deep learning techniques and methods in particular. We want it to be fast and scalable.”
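Once a model has turned pixels into facts, a question like “how common is red clothing?” comes down to counting. The sketch below shows only that final counting step and assumes the per-photo labels already exist. The records, the field names `city` and `attributes`, and the function `attribute_share` are all hypothetical and not part of the team's system.

```python
from collections import defaultdict

# Hypothetical classifier output: one record per photo (made-up data).
photos = [
    {"city": "New York", "attributes": {"red", "hat"}},
    {"city": "New York", "attributes": {"glasses"}},
    {"city": "Paris", "attributes": {"red", "tie"}},
    {"city": "Paris", "attributes": {"red"}},
    {"city": "Paris", "attributes": {"hat"}},
]

def attribute_share(records, attribute):
    """Return, per city, the fraction of photos tagged with `attribute`."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for rec in records:
        totals[rec["city"]] += 1
        if attribute in rec["attributes"]:
            hits[rec["city"]] += 1
    return {city: hits[city] / totals[city] for city in totals}

# Share of photos per city in which red clothing was detected.
red_share = attribute_share(photos, "red")
```

With this made-up data, half of the New York photos and two thirds of the Paris photos are tagged red. Comparing such shares across cities and over time is one simple way the influence questions above could be approached.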

Once they have laid the groundwork, they will look at building a front end and exploration interface on top of that.

Snavely and his team found that blue collared shirts, plaid shirts and black t-shirts were the most common styles around the world.

The Start of Something New

Snavely first came to Cornell in 2009, after he had completed his PhD at the University of Washington in Seattle. He was on leave at Google in 2014 and 2015, before returning to Cornell. Last year, he moved to Cornell Tech from the Ithaca campus.

He wants his work to have real-life applications, he explains, and was attracted by Cornell Tech’s mission of engagement. And the intimate, friendly environment makes it easy to find collaborators in fields such as graphics and machine learning. Perhaps unsurprisingly, this pioneering thinker also relishes being part of something new.

“Being here close to the start of something is very exciting to me: trying to define what the place is and being a part of that. The culture is great,” he says.